I am organising a special session at the upcoming International Symposium on Forecasting on the topic “Forecasting with Combinations and Hierarchies”. Please contact me if you are interested in contributing to this session.

In many applications, time series can be organised hierarchically and grouped or aggregated in several ways, based on some predefined structure. For example, an organisation may be interested in forecasting a quantity defined by geographical boundaries, which can be modelled at a detailed disaggregate level or at an aggregate top level. Such problems are typically modelled using hierarchical time series methods: bottom-up, top-down or middle-out. These methods attempt to reconcile the forecasts for the various time series by producing forecasts at a single level of the hierarchy and then aggregating or disaggregating them, as appropriate, to the rest of the hierarchy.
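To make the bottom-up and top-down ideas concrete, here is a minimal sketch on a hypothetical two-level hierarchy where `Total = A + B`. The series names, the numbers and the function names are made up for illustration. The top-down variant shown splits the total using each series' share of the historical total, which is only one of several possible disaggregation rules.

```python
def bottom_up(bottom_forecasts):
    """Forecast each bottom series, then sum them to get the total."""
    total = sum(bottom_forecasts.values())
    return {"Total": total, **bottom_forecasts}


def top_down(total_forecast, history):
    """Forecast the total, then split it by historical shares.

    `history` maps each bottom series to its past observations; a series'
    share is its historical sum divided by the sum over all bottom series.
    """
    grand_total = sum(sum(obs) for obs in history.values())
    result = {"Total": total_forecast}
    for name, obs in history.items():
        result[name] = total_forecast * sum(obs) / grand_total
    return result


if __name__ == "__main__":
    # Hypothetical history: A accounts for 30% and B for 70% of the total.
    history = {"A": [10, 12, 14], "B": [30, 28, 26]}
    print(bottom_up({"A": 15.0, "B": 25.0}))  # Total is 40.0
    print(top_down(50.0, history))            # A ~ 15, B ~ 35
```

Either way the output is coherent (the parts add up to the total), but each method only uses the information in the single level it forecasts.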

More recently, a method based on optimal combinations has been proposed (Hyndman et al., 2011) that has been shown to perform favourably against competing hierarchical methods (Athanasopoulos et al., 2009). The approach linearly combines the forecasts of all the time series in the hierarchy, with the weights of the linear combination determined by the hierarchical structure imposed by the problem definition. Under certain assumptions, such combinations are optimal in minimising the reconciliation error. Current research suggests that these assumptions may be too strong, and alternative weighting schemes that perform better empirically have been proposed (Athanasopoulos et al., 2014), although the best combination scheme remains an open question.
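As a rough illustration, the sketch below implements the ordinary least squares (identity-weighted) form of the combination approach: forecasts for every node are stacked in `y_hat`, a summing matrix `S` encodes the hierarchy, and the reconciled forecasts are `S (S'S)^-1 S' y_hat`. The notation, function names and numbers are my own assumptions for this example, and the alternative weighting schemes mentioned above would replace the implicit identity weights with other matrices, which is not shown here. Plain Python is used so that the linear algebra is visible.

```python
def transpose(X):
    return [list(row) for row in zip(*X)]


def matmul(X, Y):
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(A)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x


def ols_reconcile(S, y_hat):
    """Return S (S'S)^-1 S' y_hat: coherent forecasts for every node."""
    St = transpose(S)
    StS = matmul(St, S)
    Sty = [sum(St[i][k] * y_hat[k] for k in range(len(y_hat)))
           for i in range(len(St))]
    beta = solve(StS, Sty)  # reconciled bottom-level forecasts
    return [sum(S[i][j] * beta[j] for j in range(len(beta)))
            for i in range(len(S))]


if __name__ == "__main__":
    # Rows of S: Total, A, B (hypothetical hierarchy Total = A + B).
    S = [[1, 1], [1, 0], [0, 1]]
    # Independent base forecasts that are not coherent: 15 + 25 != 50.
    y_hat = [50.0, 15.0, 25.0]
    print(ols_reconcile(S, y_hat))  # first entry equals the sum of the others
```

Unlike bottom-up or top-down, every base forecast in `y_hat` influences the reconciled value of every node.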

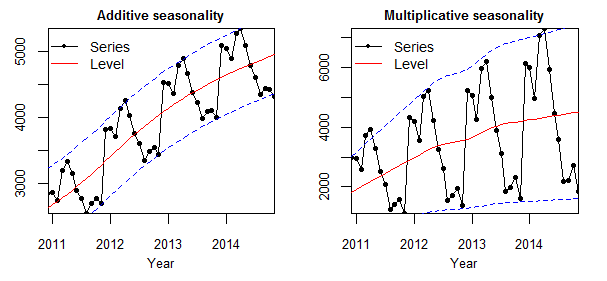

Irrespective of the methodology used to solve the hierarchical/combination forecasting problem, this research is important for practice, as many organisations and companies face cross-sectional hierarchical problems defined by products, market segments, geographical boundaries, etc., or combinations of these factors.

Recently, Kourentzes et al. (2014) proposed that instead of restricting our investigation to cross-sectional hierarchies, we can define hierarchies of a temporal nature, which are applicable to any forecasting problem. They showed empirically that using multiple temporal aggregation levels of a time series and combining the forecasts produced at each level resulted in predictions that substantially outperformed conventional forecasting approaches. Combining forecasts across temporal hierarchies better captured the various structural components of the time series and mitigated the impact of model misspecification, in terms of either model form or parameters. Their methodology therefore demonstrated that hierarchical combination problems are relevant to any forecasting application and deserve more attention in research, as there is substantial potential for impact on practice.
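The temporal-hierarchy idea can be sketched as follows: aggregate a series into non-overlapping buckets of several sizes, forecast at each level, bring every forecast back to the original frequency and combine. This is a deliberately simplified illustration, not the algorithm of Kourentzes et al. (2014), which (as its title indicates) works with estimated structural components across frequencies rather than averaging final forecasts. The choice of simple exponential smoothing with a fixed `alpha`, the aggregation levels, equal combination weights and all function names are assumptions made for this example.

```python
def temporal_aggregate(y, k):
    """Sum non-overlapping blocks of k observations.

    Leading observations are dropped when len(y) is not a multiple of k,
    so that the most recent block is always complete.
    """
    start = len(y) % k
    trimmed = y[start:]
    return [sum(trimmed[i:i + k]) for i in range(0, len(trimmed), k)]


def ses_forecast(y, alpha=0.3):
    """Simple exponential smoothing with a fixed alpha (hypothetical choice).

    Returns the final smoothed level, i.e. the flat one-step-ahead forecast.
    """
    level = y[0]
    for obs in y[1:]:
        level = alpha * obs + (1 - alpha) * level
    return level


def combined_forecast(y, levels=(1, 2, 3, 4, 6, 12), alpha=0.3):
    """Average per-period forecasts produced at several aggregation levels."""
    if len(y) < max(levels):
        raise ValueError("series too short for the largest aggregation level")
    per_period = []
    for k in levels:
        aggregated = temporal_aggregate(y, k)
        # One aggregated bucket spans k original periods, so divide by k
        # to express the forecast on the original (e.g. monthly) scale.
        per_period.append(ses_forecast(aggregated, alpha) / k)
    # Equal-weight combination across aggregation levels.
    return sum(per_period) / len(per_period)


if __name__ == "__main__":
    # Hypothetical monthly series with a repeating 12-month pattern.
    monthly = [10.0 + (t % 12) for t in range(48)]
    print(temporal_aggregate(monthly, 12))  # [186.0, 186.0, 186.0, 186.0]
    print(combined_forecast(monthly))
```

At the annual level (k = 12) the seasonal pattern disappears entirely, while the monthly level retains it; this is why different aggregation levels expose different components of the same series.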

References

Hyndman, R. J.; Ahmed, R. A.; Athanasopoulos, G. & Shang, H. L. Optimal combination forecasts for hierarchical time series. Computational Statistics and Data Analysis, 2011, 55, 2579-2589

Athanasopoulos, G.; Ahmed, R. A. & Hyndman, R. J. Hierarchical forecasts for Australian domestic tourism. International Journal of Forecasting, 2009, 25, 146-166

Athanasopoulos, G.; Hyndman, R. J.; Kourentzes, N. & Petropoulos, F. Forecasting hierarchical time series. The 2014 International Symposium on Forecasting, 2014, Rotterdam.

Kourentzes, N.; Petropoulos, F. & Trapero, J. R. Improving forecasting by estimating time series structural components across multiple frequencies. International Journal of Forecasting, 2014, 30, 291-302